Exponential Functions and the Number e

Starting from repeated multiplication and arriving at a universal constant.

The family of exponential functions (things of the form $a^x$) is ubiquitous in mathematics, physics and engineering. Because the rules for manipulating such expressions are relatively simple, once learned they can be used almost without thinking, and the meaning behind them is often lost. So instead of a rigorous approach, I will try to provide some bits of intuition, focused specifically on what makes $e$ such a “special” number.

Integer Exponents

Exponentials first arise out of a need for a shorthand for multiplication (much like multiplication itself can be seen as a generalization of a shorthand for addition). Let's say we have a number $a$ which we've multiplied together a bunch of times to get $a\cdot a\cdot a\cdot a\cdot a\cdot a$. Since there are 6 $a$'s in this expression, we decide to write it $a^6$. The rules of exponents learned in high school are just a consequence of this notational choice. To see this, consider the numbers $a^4$ and $a^2$. Since these are just shorthand expressions for the product of four $a$'s and two $a$'s respectively, it is easy to see what the shorthand of their product should be:

$$a^4\cdot a^2=(a\cdot a\cdot a\cdot a)\cdot(a\cdot a)=a^6$$

And in general, multiplying a product of $n$ successive $a$'s and a product of $m$ successive $a$'s simply gives a product of $n+m$ successive $a$'s. In our shorthand then:

$$a^n\cdot a^m=a^{n+m}$$

Which is nothing other than the standard rule for exponents. Now say that you have a number $b$ which happens to be a product of three $a$'s, that is $b=a^3$. Is there a “nice” way to talk about the product of $b$ with itself five times? If each $b$ is composed of three $a$'s and we have five $b$'s multiplied in succession, then another way to phrase this is simply that we have 15 $a$'s being multiplied together. In the exponential shorthand:

$$b^5=(a^3)^5=a^{15}$$

This also extends arbitrarily; $(a^n)^m$ says to take the number $a$ and multiply it together $n$ times, and then take that and multiply it by itself $m$ times. Obviously $m$ copies of a set of $n$ objects placed side by side is just a list of things of length $nm$. This is then written in our exponential shorthand as $(a^n)^m=a^{nm}$.

This idea of using exponents as a shorthand for repeated multiplication has turned out to be quite useful, so let's see how it extends. As of now our preliminary definition is restricted to positive integer exponents (because it only makes sense to multiply things together an integer number of times). What about negative integers? If positive integer exponents give us a shorthand for repeated multiplication, could it be possible to define negative integer exponents to be a shorthand for repeated division? Much like $a^5$ means “multiply by $a$ five times”, $a^{-5}$ would signify dividing by $a$ five times. This turns out to work well with the exponent rule above: say you multiply by $a$ five times and then divide by $a$ three times. This is obviously equivalent to only having multiplied by $a$ twice, so in effect we would like it to be true that $a^5\cdot a^{-3}=a^2$. But by our rule for dealing with this shorthand, we already know that $a^5\cdot a^{-3}=a^{5+(-3)}=a^2$, so it all checks out! This gives some confidence that there is no real issue with extending the exponential shorthand to both positive and negative integers, allowing it to represent both repeated multiplication and division.

This also allows us to assign meaning to the idea of “a product of no $a$'s at all”. In our shorthand this would be represented by $a^0$, and as of yet we have not explicitly given it a meaning (we have only defined what it means to raise $a$ to the power of a positive or negative integer). It might seem that this is a case we should just ignore, for when are we ever going to need a product of zero $a$'s? However, if we expect to take this notation seriously then the notion of $a^0$ is forced upon us naturally: it is the symbol we would use to represent first multiplying by $a$ and then dividing by $a$ (as this would be written $a^1\cdot a^{-1}=a^{1-1}=a^0$). In fact, it is the symbol which represents multiplying by $a$ $n$ times and then going on to divide by $n$ copies of $a$, for any $n$. Since $a^n/a^n=1$, it follows that to be consistent we must say that $a^0$ is just another symbol representing the multiplicative identity, which gives us the nice interpretation “multiplying something by a product of no $a$'s doesn't do anything at all”, or in symbols $a^0=1$.

Thus, when taking integers as input, exponentials can be thought of as just a way to save paper (long strings of $a$'s aren't very enlightening to write down). There's no real new information here, and the rules of exponents are just a way of codifying the nature of repeated multiplication.
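To make the “shorthand” view concrete, here is a minimal Python sketch that computes integer powers using nothing but repeated multiplication and division, and then checks the rules discussed above. The helper name `int_power` and the choice of base 3 are my own assumptions for illustration; exact fractions are used so the comparisons are not muddied by rounding.

```python
from fractions import Fraction

def int_power(a, n):
    """Return a**n for an integer n using only repeated multiplication or division."""
    result = Fraction(1)  # a product of no a's is the multiplicative identity
    for _ in range(abs(n)):
        result = result * a if n > 0 else result / a
    return result

a = Fraction(3)  # arbitrary nonzero base, chosen only for illustration

# Multiplying five times, then dividing three times, is multiplying twice.
assert int_power(a, 5) * int_power(a, -3) == int_power(a, 2)
# Five copies of (three a's) is fifteen a's.
assert int_power(int_power(a, 3), 5) == int_power(a, 15)
# Multiply once, divide once: nothing happens.
assert int_power(a, 1) * int_power(a, -1) == int_power(a, 0) == 1
```

Nothing here goes beyond counting how many times we multiply or divide, which is the whole point of the section.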

Rational Exponents

Given our current view of exponentials as just a shorthand for multiplication, it might seem silly to try and stick a meaning to something like $a^x$ when $x$ is not an integer. However, a lot of interesting mathematics falls out of trying to extend things that appear useful and simple. It's good to keep in mind that right now, such an expression doesn't make any sense. Until we give it a meaning, it's just a collection of symbols. To avoid jumping the gun, we will first try to define a simpler concept: a number raised to a fractional power. In fact, it would probably be easiest to start with an example: let's try and give meaning to the expression $a^{1/2}$. We would like everything we do in extending the exponential notation to be compatible with the case where it is intuitive (integer exponents), so in particular we would like $a^{1/2}\cdot a^{1/2}=a^{1/2+1/2}=a^1=a$. That is, to be consistent we need $a^{1/2}$ to be the number that when multiplied by itself gives $a$. This number already has a name: the square root of $a$. Thus we have a guess at a possible extension: $a^{1/2}=\sqrt{a}$.

What about sticking other fractions in the exponent? If we say that $r$ is some general fraction, then we can write $r=p/q$ for two integers $p$, $q$. Because we want our extension to be consistent with the rules from before, we can write $(a^{1/q})^q=a^{q/q}=a$. Thus the number $a^{1/q}$ can be identified with the $q$th root of $a$. This gives us a nice way to define all rational exponents. Any rational exponent of $a$ can be looked at as being $p$ copies of the $q$th root of $a$ multiplied together, for some $p$, $q$; that is, $a^{p/q}=(a^{1/q})^p$. This lets us look at an expression like $a^{14/3}$ and know that it simply means to take the cube root of $a$, and multiply it by itself 14 times.

Without going into any depth (this is only supposed to help provide intuition, after all), we have a pretty good grasp on the exponential function. Positive integer exponents are just a shorthand for repeated multiplication. Negative integer exponents are just repeated division. Exponents of the form $1/n$ are just an alternative way of writing the $n$th root. By combining these simple cases, we can find the value of any rational exponent.
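As a rough illustration of “combining these simple cases”, the Python sketch below builds a rational power out of exactly two ingredients: a $q$th root (found here by bisection, which only ever multiplies numbers together) and repeated multiplication or division. The function names `qth_root` and `rational_power` are hypothetical, and bisection is just one convenient way to find the root, assumed here for simplicity.

```python
import math

def qth_root(a, q, iterations=200):
    """Approximate the positive r with r**q == a (for a > 0) by bisection."""
    lo, hi = 0.0, max(1.0, a)  # the root always lies in this interval
    for _ in range(iterations):
        mid = (lo + hi) / 2
        if mid ** q < a:       # integer power: plain repeated multiplication
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def rational_power(a, p, q):
    """a**(p/q) as |p| copies of the q-th root, multiplied (p > 0) or divided (p < 0)."""
    root = qth_root(a, q)
    result = 1.0
    for _ in range(abs(p)):
        result = result * root if p > 0 else result / root
    return result

# The cube root of 2, multiplied by itself 14 times, agrees with the built-in power.
assert math.isclose(rational_power(2.0, 14, 3), 2.0 ** (14 / 3), rel_tol=1e-9)
# A negative fraction: divide by the square root of 9 once.
assert math.isclose(rational_power(9.0, -1, 2), 1 / 3, rel_tol=1e-9)
```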

Real Exponents

What about the irrationals, though? The real line is chock full of 'em, so aren't we missing a lot here? Well, yes and no. The good thing about the rationals is that they are everywhere…pick any little piece of the real line, no matter how small you'd like, and there'll be a rational number in it (actually, there'll be infinitely many of them). So from an intuitive standpoint, it's probably easiest to think of the exponential as having a nice interpretation on the rationals, and then just being forced to take the correct values on the irrationals to “fill in the gaps”. This can be made more precise by noting that $\mathbb{R}$ is separable (it has $\mathbb{Q}$ as a countable dense subset) and so the values of any continuous function over $\mathbb{R}$ are totally determined by the values it takes on $\mathbb{Q}$ (which, in our case, have been completely described). Thus, the value of $a^x$ for an irrational $x$ is forced on us because we know the values of $a$ raised to the power of all rationals near $x$, and this is as precise as we will need.
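To get a feel for how the rationals “fill in the gaps”, here is a small Python sketch that raises 2 to better and better rational approximations of $\sqrt{2}$. The base 2 and the exponent $\sqrt{2}$ are choices of mine for illustration, and the decimal truncations are just one convenient family of nearby rationals.

```python
import math
from fractions import Fraction

a = 2.0
target = math.sqrt(2)  # an irrational exponent, picked only as an example

for digits in range(1, 8):
    # Truncate sqrt(2) to a rational with `digits` decimal places.
    r = Fraction(math.floor(target * 10 ** digits), 10 ** digits)
    print(f"{str(r):>18}  {a ** float(r):.10f}")

print(f"{'sqrt(2)':>18}  {a ** target:.10f}")
```

Running this, the printed values should settle down digit by digit as the rational exponents close in on $\sqrt{2}$, which is the sense in which the value at the irrational is forced.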

The Laws of Exponents: Geometrically

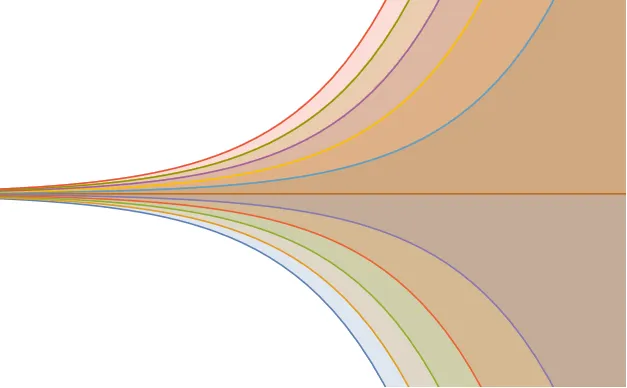

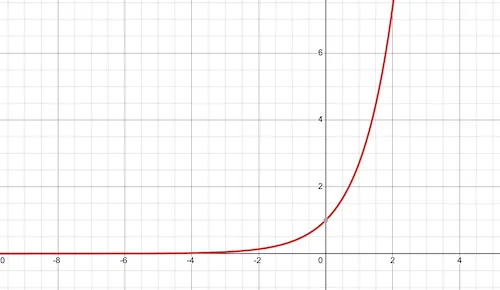

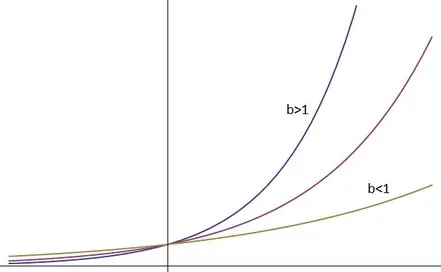

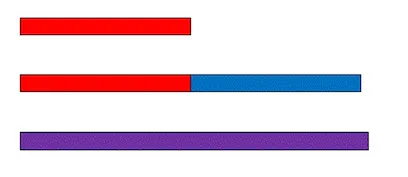

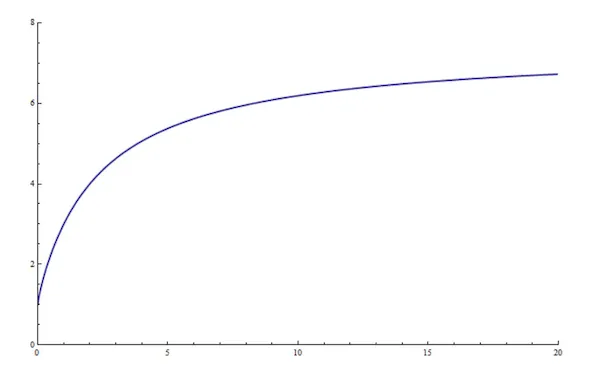

Once we begin thinking about real number exponents, we can think of the exponential in terms of its graph, and try to reason about it from that perspective. What does the graph of an exponential function look like? Well, generically they look like this:

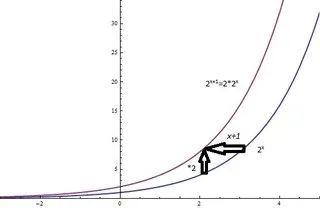

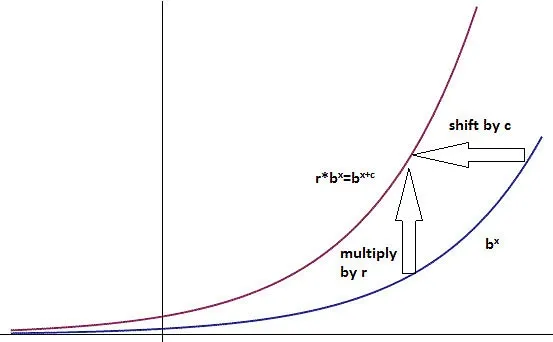

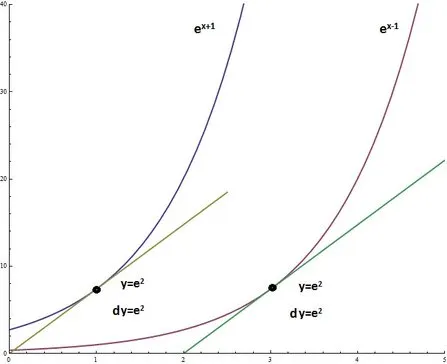

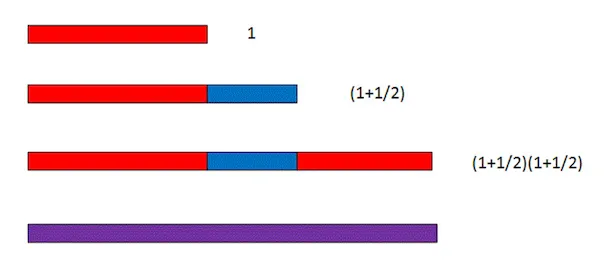

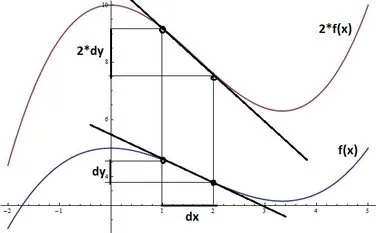

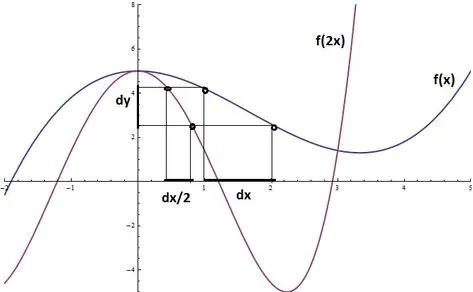

But this isn't just any old upward moving squiggle with a horizontal asymptote to the left: the properties of exponentials we discovered above force the exponential graph to have some very special properties. For instance, how can we interpret the rule $a^{x+y}=a^x a^y$ geometrically? As an example, let's take $a=2$. What happens if we multiply each point on the graph by two? This would amount to a vertical stretching of the graph, moving each point twice as far from the x axis as it originally was. Algebraically, this new function is $2\cdot 2^x$, which by the laws of exponents can be rewritten as $2^{x+1}$. Quick recap: our original function was $2^x$, and we doubled it to get $2^{x+1}$. However, this is simply the original function shifted to the left by 1. Thus stretching vertically by a factor of 2 produces the same result as shifting the graph to the left by 1. This is shown below.

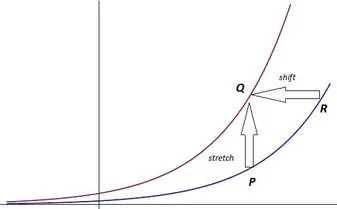

This is a special property of exponentials: their self-similarity. Stretching one vertically is equivalent to translating it horizontally instead. Let's see to what extent this is true. Let's pick some arbitrary exponential $f(x)=a^x$, and some point $P$ on its graph (this is the blue graph in the figure below). If we let $x_0$ denote the x-coordinate of $P$, the y-coordinate of $P$ is simply $a^{x_0}$. Now let's stretch this entire graph vertically by a factor $c$. The new graph, $g(x)=c\,a^x$, is purple in the below figure. If $c$ is a positive number, then we can find some number $k$ such that $c=a^k$. This allows us to re-express $g$ as $g(x)=a^k a^x$, or equivalently $g(x)=a^{x+k}$. Thus we have the relation $g(x)=f(x+k)$. If we let $P'$ denote the point on $g$ which is the stretched image of $P$, this gives us a second way of getting to $P'$ from the original graph: we can start at the point $x_0+k$ on the x axis, and move vertically to a point $Q$ on $f$. Shifting this point horizontally $k$ units to the left gives the point $P'$, which is a translated image of $Q$.

The geometric interpretation of the first “law” of exponents can then be stated as follows: if $f(x)=a^x$ is some exponential function, then a stretch of the form $f(x)\to a^k f(x)$ is equivalent to a translation of the form $f(x)\to f(x+k)$. This is a property that can be observed on a graphing calculator or with a computer algebra system: as you zoom in on an exponential curve, provided you scale the axes right, it looks the same at any size. This self-similarity is the basis of the “memoryless” property of the exponential distribution often quoted in statistics, and will help us understand exponential derivatives in a bit.
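If you don't have a graphing calculator handy, the same check can be sketched numerically in Python. The base, the stretch factor and the sample points below are arbitrary values I picked for illustration; the only requirement is that the stretch factor be positive so that the matching shift exists.

```python
import math

a = 2.0             # base of the exponential (assumed for illustration)
c = 8.0             # vertical stretch factor, must be positive
k = math.log(c, a)  # the horizontal shift, chosen so that a**k == c

for x in [-2.0, 0.0, 0.5, 3.0]:
    stretched = c * a ** x    # stretch the graph vertically by c
    shifted = a ** (x + k)    # shift the graph left by k units
    assert math.isclose(stretched, shifted)
```

Changing `a` or `c` to any other positive values (with `a` different from 1) should leave the assertions passing, since the shift `k` is recomputed to match.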

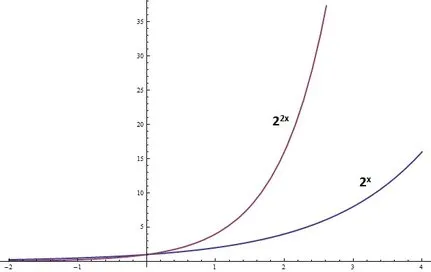

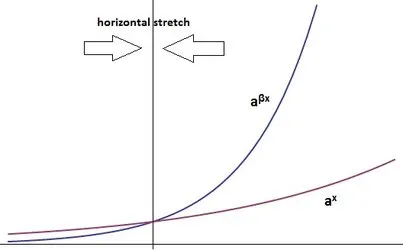

We have taken a look at what happens when we stretch an exponential vertically, but what about if we stretch it horizontally? A horizontal stretch is a stretch of the x-axis, and so we can accomplish it algebraically by replacing $x$ with $sx$ in our formula. For $0<s<1$, this corresponds to an expansion, and $s>1$ is a contraction, or squishing of the graph about the x-axis origin. This is illustrated below.

From the above pictures, it appears reasonable to assume that a horizontal stretch (either compression or expansion) produces yet another exponential function. To take a concrete example, let's go back to our old friend $2^x$. This graph can be horizontally compressed by replacing $x$ with $2x$, giving $2^{2x}$. These two exponentials are shown below.

Because throughout our extension of exponentiation from the integers to all real numbers we forced the identity $(a^x)^y=a^{xy}$ to hold, we can use this to re-express our compressed exponential in a more insightful way. In terms of the example,

$$2^{2x}=(2^2)^x=4^x$$

And so a horizontal compression is equivalent to a change of the exponential base. Given an exponential $a^x$, the effect of a horizontal stretch is really just a change of base. If we let $b$ stand for the value $a^s$, then a stretch of factor $s$ is equivalent to the change $a^x\to b^x$.

Thus we have two ways to look at the rules for manipulating exponents: either we can view them algebraically as simply facts about what must be true for integer exponents to make intuitive sense, or we can view them as statements about how vertical and horizontal stretches of an exponential graph really end up resulting in translations or changes of base. The ability to get from one exponential to another by two quite different means is a pretty useful property for intuition: in fact, these properties allow you to look at any exponential whatsoever as just a squished copy of some other exponential. To see this, we are going to need to know a little about undoing exponentials, so I'll pick up on this train of thought again in a bit.

Logarithms and Rewriting Exponentials

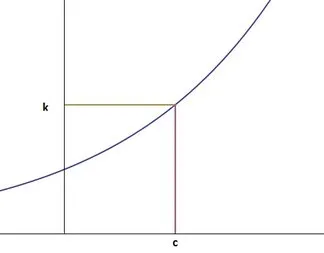

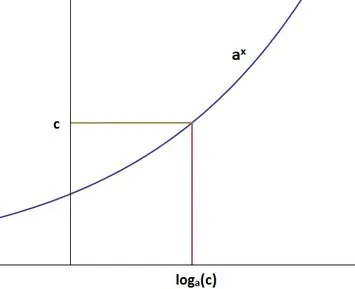

At one point in the last section, we had a number $c$, and we claimed that it was possible to rewrite this as $a^k$ for some $k$. Why can we do this? A quick picture will help here; plotted below is an arbitrary exponential curve $a^x$, with some number $c$ selected along the y-axis. Looking at the corresponding x value (call it $k$) we notice that $a^k=c$ for this particular choice, as required. So long as $c>0$, this argument applies in general; it's easy to see that there will always be some corresponding x value.

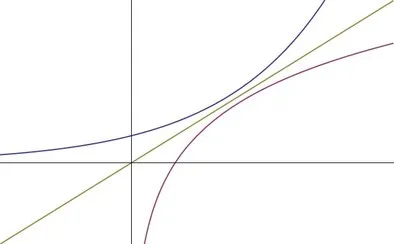

Finding such a $k$ for a given $c$ is an inverse problem (given the output of the function, $c$, find an input $k$ such that $a^k=c$), and thus we are in need of an inverse function for the exponential. Since every exponential with base $a\neq 1$ is a bijection from the real line onto the positive reals, it is invertible, and we can actually do this. Geometrically, an inverse function is simply the reflection of the original function over the line $y=x$; a graph of both an exponential and its inverse is shown below.

To not wander too far astray, we won't spend too much time worrying about the inverse function; hopefully the above illustration is enough to provide some feeling that it exists (the proof is simple: just verify the injectivity and surjectivity of the exponential as a map $\mathbb{R}\to(0,\infty)$). Now that we know there is one, to be able to discuss it precisely we need a good notation for it. If we have the exponential $a^x$, then we will denote its inverse by $\log_a x$, and call such a function the logarithm with base $a$. This function is defined exactly like the first picture in this section: if $k$ and $c$ are numbers such that $a^k=c$, then $\log_a c=k$. Pictorially:

Ok, we've covered enough stuff now to discuss how the self-similarity properties of exponentials allow us great freedom in how we write them (and interpret them). Let's say for some reason or another, you've found a particular exponential function that you really like, call it $a^x$. You're working on some problem and another exponential shows up, something that superficially bears no resemblance to your favorite member; we'll write this guy $b^{x+k}$. There might be good physical reason that this exponential has the form it does, but no matter; whatever the source of this particular exponential there is a way to interpret it as simply a stretched copy of your $a^x$. To see this, let's first consider the form of the exponential you were given. It is the simple exponential $b^x$, after a horizontal shift of $k$ units to the left. We know from previously that a horizontal shift is really just a vertical stretch, and so we can think of $b^{x+k}$ as being $c\,b^x$, for a vertical stretch factor $c$ (this stretch factor can easily be seen to equal $b^k$).

Now what about the remaining exponential? It is still the wrong base, so we need a way of viewing $b^x$ as some stretched copy of $a^x$. We saw previously that change of base is really just a horizontal stretching, so we know that there's a way to write $b^x$ in the form $a^{sx}$, for some horizontal stretch of magnitude $s$. The value of $s$ satisfying this can be found using the exponent laws: we want $s$ to satisfy the equation $b^x=a^{sx}=(a^s)^x$, so we need $a^s=b$. If we now view $s$ as a variable in the exponential function with base $a$, we have a situation that has already been discussed: we know the y-coordinate of some point on the exponential curve $a^x$, and we want to find the x coordinate. This can be accomplished using the inverse function, giving us our answer

$$s=\log_a b$$

Thus we have the formula $b^{x+k}=c\,a^{sx}$ and the values of $c$ and $s$ which satisfy it ($c=b^k$ and $s=\log_a b$), allowing us to view our arbitrary exponential as really just $a^x$, stretched horizontally by $s$ and vertically by $c$. Below is an animation of how the two stretches in unison actually achieve this:
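The recipe can also be sketched in a few lines of Python. Here I assume a favorite base `a`, a second base `b` and a shift `k`, and compute a vertical factor `c` and a horizontal factor `s` so that `b**(x + k)` and `c * a**(s * x)` agree; the function name `as_stretched_copy` and the sample numbers are made up for illustration.

```python
import math

def as_stretched_copy(a, b, k):
    """Return (c, s) such that b**(x + k) == c * a**(s * x) for every x.

    Assumes a > 0, b > 0 and a != 1.
    """
    s = math.log(b, a)  # horizontal stretch: the number with a**s == b
    c = b ** k          # vertical stretch replacing the shift by k
    return c, s

# Rewrite 10**(x + 2) as a stretched copy of e**x.
c, s = as_stretched_copy(a=math.e, b=10.0, k=2.0)
for x in [-1.0, 0.0, 0.3, 2.5]:
    assert math.isclose(10.0 ** (x + 2.0), c * math.e ** (s * x))
```

So computationally you really would only need one exponential function, fed different arguments and rescaled.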

Exponentials and Growth

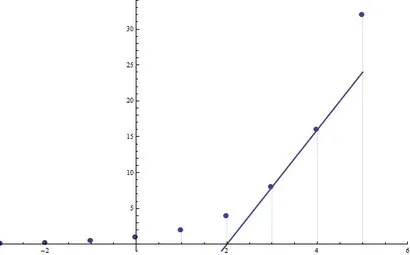

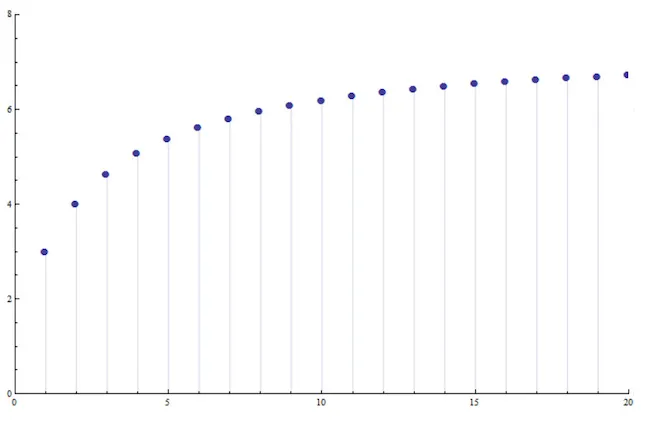

Our first introduction to exponentials was through their discrete nature, as an extension of multiplication. However, this is not the only situation in which they arise; heck, it's not even what makes them most useful. Passing from an algebraic shorthand to the continuous case left us with a set of functions over $\mathbb{R}$ with some pretty cool properties. One of the most useful interpretations of exponentials is that of growth: they are the natural choice of mathematical model for a kind of growth which depends on how much “stuff” is already present.

To see this, we will first take a step back and consider the nature of growing things in general. The simplest kind of growth is linear growth: say each day you are given a dollar by your friend. After 5 days, you will have 5 dollars; after $x$ days, you will have $x$ dollars. Your riches grow linearly with time. The amount of money you will make tomorrow doesn't depend on how much money you have today; regardless of whether you are broke or a millionaire, you will make one dollar tomorrow.

Now let's consider a different scenario: someone gives you a dollar, and you invest it in some ridiculous company which promises to double your total investment each day. So, the next day they give you a dollar, doubling your current amount. The second day, however, they give you 2 dollars, so you now have a total of 4. The following day they double that, giving you 4 so that you have 8. Continuing this process, you see that your dollar amounts each day form the list {1,2,4,8,16,32,64,…}, which is nothing other than the table of values for $2^x$. In this model of growth, the amount you earn tomorrow depends on how much you have today: if you have 1 dollar you will make a dollar, and if you have a million, you'll make a million. The striking difference between these two modes of growth can be seen below.

Looking at exponentials as a form of growth allows a nice physical interpretation of the “vertical stretch = horizontal shift” we talked about: say you're that lucky investor whose total investment is doubled each day. If you start on day 0 with 1 dollar, your total on day $x$ is modeled by $2^x$. But what if you started by investing 8 dollars? Your income on day 2 would be 16 dollars, making your total 32; your total the next day would be 64, then 128, and so on. In fact, each day you will be making as much money as the guy who invested a single dollar does 3 days later. Your income is simply his income shifted by 3 days, since $8\cdot 2^x=2^{x+3}$. Thus investing 8 dollars to begin with (stretching the income function by a factor of 8 vertically) is the same thing as just having invested a single dollar 3 days earlier (shifting the income function horizontally by 3 units).
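The two investors are easy to simulate. The Python sketch below (with function names of my own choosing) tracks the daily totals for the dollar-a-day friend and for the doubling scheme, and checks that starting with 8 dollars is the same as starting with 1 dollar three days earlier.

```python
def linear_totals(days, gift=1):
    """Totals when you receive a fixed gift each day, starting from nothing."""
    return [gift * day for day in range(days + 1)]

def doubling_totals(days, start=1):
    """Totals when your whole amount is doubled each day."""
    totals = [start]
    for _ in range(days):
        income = totals[-1]               # today's income equals what you already have
        totals.append(totals[-1] + income)
    return totals

print(linear_totals(6))    # grows by the same amount every day
print(doubling_totals(6))  # grows by whatever you currently hold

one_dollar = doubling_totals(10)              # days 0..10, starting with 1
eight_dollars = doubling_totals(7, start=8)   # days 0..7, starting with 8
assert eight_dollars == one_dollar[3:]        # same totals, three days ahead
```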

In fact, any growth process where your amount of growth is proportional to how much you have at the moment is a form of exponential growth. Tripling your total amount each day is the exponential $3^x$; quadrupling amounts to $4^x$. Heck, even multiplying your total by some arbitrary number $a$ each day is modeled by the function $a^x$. These examples have all been of the discrete case, but the same intuition carries over to the continuous case: if you have a growth rate which is proportional to the amount of stuff you have, the growth will be exponential. We can formulate this more precisely in the language of differential calculus: let $y(t)$ be a function which for each time $t$ outputs an “amount”. At a given time $t$, the rate at which $y$ is growing is its derivative, $y'(t)$. To say that the growth rate is proportional to the amount of stuff you have is to say that the derivative is proportional to the function's value at that point:

$$y'(t)=k\,y(t)$$

The functions which satisfy this are the exponentials that we have been discussing all along (I won't bother deriving why…the usual proof involves knowing that a certain integral comes out to be the natural logarithm, a fact that here doesn't help much with intuition). Hopefully the analogy of this differential equation with the discrete case is at least suggestive that both should follow the same general trend.

The Special Number $e$

It seems you can almost not hear of or work with exponentials without encountering that seemingly random irrational number, $e$. It's one of those things that is mystifying the first few times you see it, but it comes up so often that before long it is second nature to use. However, during the learning process (at least in my experience) using $e$ for years never really gave a good sense of exactly what made it special. $e$ is singled out for multiple different reasons, which is probably why it's hard to see any underlying theme when learning to use it.

First of all, you may ask, why would there even be any “special” base for the exponential? What has been discussed so far has been done in full generality, with $a$ serving as a placeholder for any base you could want. In this sense, it seems that all possible exponentials are created equal. Sure, we can pick some $a$ that we like and write all exponentials as just stretched versions of it; and this might be a very convenient thing to do (computationally you would only ever have to worry about one exponential function, and just feed it different arguments), but how could there be a natural way to select such a base? My goal here is to convince you that there is a natural choice, that the choice is $e$, and that the various ways of defining this number actually share a common theme.

Let's start back with the idea of exponentials representing growth: out of all discrete growth rates, the one singled out as “special” is the unit growth rate, or an increase of 100% of your amount each day. This is our friend $2^x$ from before, but let's see why. As we argued before, discrete exponential growth is a process where the gain over each unit of time is proportional to the current amount. Say the current value on a given day is $y_n$. Since the gain on the next timestep is proportional to $y_n$, we can write $\Delta y_n=k\,y_n$ for some proportionality constant $k$. The amount you have at the end of that next time step is then the previous amount plus the gain: $y_{n+1}=y_n+k\,y_n$. We can factor the current amount out of this equation, giving $y_{n+1}=(1+k)\,y_n$. This gives the total amount at a given time in terms of the amount at the previous time. Applying this at every step $n$ and solving the resulting recurrence relation gives

$$y_n=(1+k)^n\,y_0$$

which gives the amount at all future times in terms of the initial amount, as a stretched exponential with base $1+k$. Setting the rate of growth to unity (that is, making the proportionality above an equality, $k=1$) gives us the equation $y_n=2^n\,y_0$, which justifies the fact that discrete growth at a unit rate is modeled by $2^x$.
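The recurrence is simple enough to step through by machine. In this Python sketch (the function name `grow` and the sample rates are my own assumptions) each step adds a gain proportional to the current amount, and the result is compared against a closed form of the shape “(1 + rate) to the number of steps, times the starting amount”.

```python
import math

def grow(start, rate, steps):
    """Step the discrete growth process: each gain is rate times the current amount."""
    amount = start
    for _ in range(steps):
        gain = rate * amount
        amount = amount + gain
    return amount

# Unit rate (gain equals the current amount) is plain doubling.
assert grow(1, 1, 10) == 2 ** 10

# Any other rate gives an exponential with base (1 + rate).
assert math.isclose(grow(5.0, 0.25, 12), 5.0 * 1.25 ** 12)
```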

The natural question is then, can we extend this notion of “unit growth” in some way to the continuous case? That is, is there any continuous exponential which we can say grows at unit rate? From our look at the discrete case we have learned two ways of characterizing unit growth: the proportionality constant between growth and current value is 1, or equivalently, at any instant in time the rate of growth is equal to the current value. How would we phrase these requirements in the continuous case? We saw before that if the growth rate is proportional to the value, we have the condition $y'(t)=k\,y(t)$. Setting $k$ to unity in this equation gives us a differential equation for unit continuous growth:

$$y'(t)=y(t)$$

The solution to this equation will then be the function which we can say grows with unit speed. Since we know by the equation's form that it is an exponential, all we must do is find which base satisfies it. For lack of a numerical value, let's call the base $e$. Stepping back from our current question about growth rates and looking at the equation itself, it turns out we have run into another quite interesting problem. This equation selects functions which are their own derivative. That is, unit growth is equivalent to the condition that the differential operator $\frac{d}{dt}$ have no effect on the function. Without considering the reasoning that got us to this equation, it may seem unlikely that it has a solution at all: after all, if it does, that means that there must be a function out there which remains invariant under differentiation. However, phrased in terms of growth rates, we know there are exponentials with growth rate less than 1, and those with growth rate greater than 1; so it makes intuitive sense that there should be some exponential in between, with growth rate of exactly 1. Thus for now, we are going to assume this equation is solvable, and see what we can learn about the qualities of its solutions.

Looking at the differential equation, we see that it is first order in $t$. Without initial conditions, this implies that there is a 1-parameter family of solutions to this equation, which we will index by $c$ and call $y_c(t)$. Also, this equation only mentions the derivative at $t$ and the y-coordinate at $t$, never the actual $t$ value at which they occur. Because of this, we can see that given a particular solution of this equation, say $y(t)$, the shifted function $y(t+c)$ is also a solution (use the chain rule).

This tells us that the solutions to this equation are all related to each other by the translation of one basic solution to the left or right. Since we additionally know the solution must be exponential, we can use our geometric interpretation of the laws of exponents to notice that shifts to the left or right are equivalent to vertical stretches. This lets us write our one parameter family of solutions as

$$y_c(t)=e^{t+c}=e^c\,e^t$$

How do we go about finding the value of this base $e$? Let's look quickly back to the discrete case for some help. Considering our unit discrete growth function $2^x$, look at the discrete analog of the derivative at a point, the slope of the secant line defined there. At a given $x$, we have

$$\frac{2^{x+1}-2^x}{(x+1)-x}=2^x(2-1)=2^x$$

Thus, the slope of the secant line at a point is equal to the value at the point (sounds rather like the differential equation we have constructed for the continuous case!). Let's see if we can't phrase this somehow in the continuous case. Instead of starting with the full blown differential equation, let's consider the slopes of the secant lines of a graph as they approach the derivative. To match the discrete case (and follow our differential equation) we want

$$\frac{e^{x+h}-e^x}{h}=e^x$$

Considering at first finite values of $h$, we have a sequence of secant lines whose slopes approach the derivative of our graph at $x$.

Let's see if we can use this equation for the slope of a secant line to solve for the correct base $e$. Multiplying through by $h$ and dividing by $e^x$:

$$e^h-1=h$$

This equation can be solved for $e$ as

$$e=(1+h)^{1/h}$$

Of course this whole time we just require that this is true in the limit, so we have a definition of $e$:

$$e=\lim_{h\to 0}(1+h)^{1/h}$$

Calculating this numerically, we get $e\approx 2.71828$.
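Here is one way to do that numerical calculation, as a hedged Python sketch. For each step size `h` it computes the base `(1 + h)**(1 / h)`, which is the base whose secant slope over a step of `h` equals its value, and prints it next to that secant slope. The helper name `base_for_step` and the sample point `x = 0.5` are assumptions of mine.

```python
import math

def base_for_step(h):
    """Base b satisfying (b**h - 1) / h == 1, i.e. b = (1 + h)**(1 / h)."""
    return (1 + h) ** (1 / h)

x = 0.5  # arbitrary point at which to compare secant slope and value
for h in [1.0, 0.1, 0.01, 0.001, 1e-4, 1e-6]:
    b = base_for_step(h)
    secant_slope = (b ** (x + h) - b ** x) / h
    print(f"h={h:<8g} base={b:.6f}  slope={secant_slope:.6f}  value={b ** x:.6f}")

print(f"math.e = {math.e:.6f}")
```

With `h = 1` the base is exactly 2, the discrete doubling case; as `h` shrinks the base should drift toward the value stored in `math.e`.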

$e$ and Growth Rate

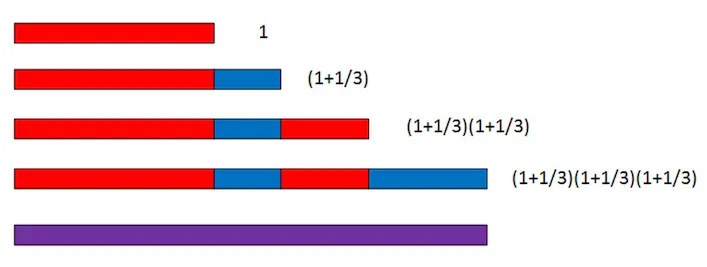

To try and get some feel for another reason $e$ is special, let's look at what happens when you have 100% growth divided over a unit time. The simplest case (that of no divisions at all) is just the discrete case from before: starting with 1 at $t=0$, after one time step we have a value of 2.

What happens if we break the time step of the discrete case in half, first increasing by 50%, and then taking the output of this process and increasing it an additional 50%? This still gives 100% growth, but broken up into pieces. After this process, we have a final amount of

$$\left(1+\frac{1}{2}\right)^2=2.25$$

Which is greater than the unbroken doubling which results in 2. What about breaking the time step into three pieces, and growing at a rate of 1/3 over each period? The result of this process is below:

$$\left(1+\frac{1}{3}\right)^3=\frac{64}{27}\approx 2.37$$

This is even larger than the previous division, which raises a question: does dividing 100% growth over the unit time interval diverge as the number of divisions tends towards infinity? If we divide the unit time interval into $n$ pieces, and grow at a rate of $1/n$ during each step, we reach a final value of

$$\left(1+\frac{1}{n}\right)^n$$

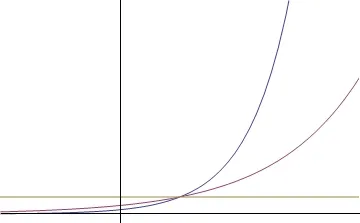

For n=100, here are the bars analogous to those above

As the bars get more numerous, exponential growth begins to appear. Changing the value of $n$ does not add more terms to the end of this sequence, but rather refines the sequence, making each individual bar smaller. The limit of these bars to zero width is then the model of continuous 100% growth that we are seeking. Looking over a wide range of $n$ values, it appears that the value after unit time is tending towards a fixed number. Assuming this indeed happens, then breaking 100% growth into pieces and distributing it over smaller and smaller intervals results in a value of

$$\lim_{n\to\infty}\left(1+\frac{1}{n}\right)^n$$

after one unit time step. But this is exactly the same limit we originally discovered with differentiation, just disguised! Recall that there we found $e=\lim_{h\to 0}(1+h)^{1/h}$. Replacing $h$ with $1/n$ means that as $h\to 0$ we must have $n\to\infty$, and this limit transforms into our newfound one. Thus the constant of continuous growth found this way is exactly the same as the one found via calculus.
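The splitting process itself is easy to simulate step by step. This Python sketch (the function name `compound` is made up for illustration) starts with 1, splits 100% growth into `n` equal pieces, and applies them one after another, rather than using a closed formula.

```python
import math

def compound(n, rate=1.0):
    """Start with 1 and grow by rate/n of the current amount, n times in a row."""
    amount = 1.0
    for _ in range(n):
        amount += amount * rate / n
    return amount

for n in [1, 2, 3, 10, 100, 10_000, 1_000_000]:
    print(f"n={n:<9} final amount={compound(n):.6f}")

print(f"math.e      = {math.e:.6f}")
```

The first two rows reproduce the 2 and 2.25 from above, and the later rows should creep up toward the constant without ever blowing up.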

The Utility of $e$ as a Base

We have come across a few different mathematical reasons that select $e$ as a rather special base for the exponential function, but its most useful property is yet to come. If $e$ represents the result of continuous 100% growth, what is the result of 50% continuous growth over an interval? Looking back at the above limit for $e$, we can see that breaking the original 100% up over $n$ time steps resulted in the formula

$$\left(1+\frac{1}{n}\right)^n$$

Likewise, distributing 50% growth over $n$ time steps would produce a final result of

$$\left(1+\frac{1/2}{n}\right)^n$$

Let's take a quick look at what this converges to as $n\to\infty$: it comes out to approximately 1.6487. Is it a coincidence that this is the square root of $e$? Definitely not! Let's see why. To get to the limiting value of this expression, we don't need to consider all the terms; the limit just asks what happens “at infinity”, so as long as we consider at least some of the terms way out there, we can see what happens (this can be made more precise by noting that since the original sequence is convergent, so are its subsequences, and to the same limit). Before jumping into the whole subsequence stuff, let's just look at a relatively “big” term, by setting $n=50$.

We can re-write this as follows:

$$\left(1+\frac{1/2}{50}\right)^{50}=\left(1+\frac{1}{100}\right)^{50}=\left[\left(1+\frac{1}{100}\right)^{100}\right]^{1/2}$$

The last term in this sequence looks a lot like one of the terms from our original sequence for $e$, except the exponent is divided by two. To make sure what we are witnessing here is something real and not just a coincidence, let's consider a much larger term: $n=5000$.

$$\left(1+\frac{1/2}{5000}\right)^{5000}=\left(1+\frac{1}{10000}\right)^{5000}=\left[\left(1+\frac{1}{10000}\right)^{10000}\right]^{1/2}$$

This time we end up with the 10,000th term in the sequence for $e$ (except we also have that factor of $\frac{1}{2}$ in the exponent). Using what we know about fractional exponents, however, raising something to the half power is the same as taking the square root. So these are just the square roots of two terms in the sequence for $e$. If this pattern continues, then we can show that the base for continuous 50% growth is really just the square root of $e$, as was suggested numerically.

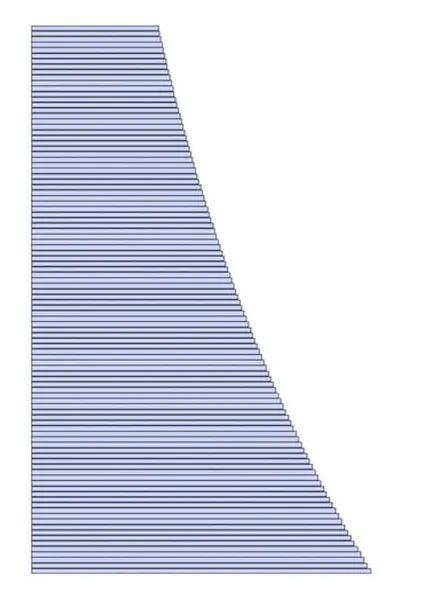

This is a remarkably useful fact: the base of the exponential which represents 50% continuous growth is simply $\sqrt{e}=e^{1/2}$. More generally, continuous growth at a given rate $r$ has for an exponential base $e^r$. Using the above argument as a guide, we can show this rather simply. For a given $r$, which we will interpret as the growth rate, we will construct a sequence of terms limiting to continuous growth at rate $r$. Like before, we will go about this by dividing $r$ evenly over the unit interval and letting $n$ tend to infinity, producing the sequence

$$\left(1+\frac{r}{n}\right)^n$$

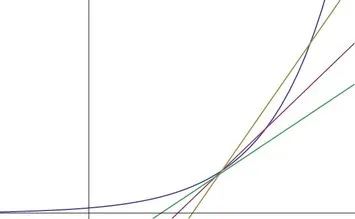

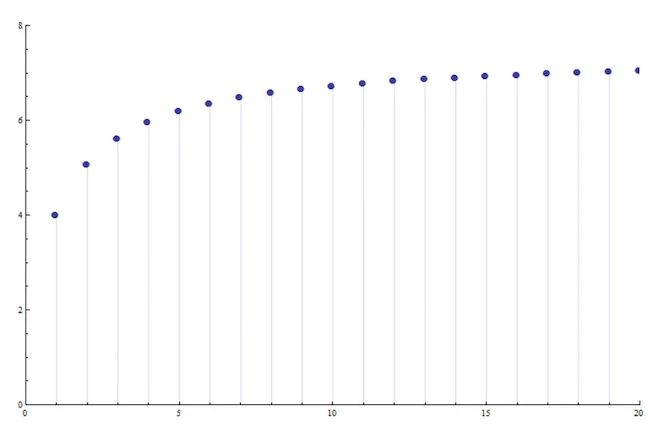

Here's a plot of the terms of this sequence for some example value of $r$:

Actually computing this limit by hand for arbitrary $r$ is challenging, so we perform a little trick. Since the natural numbers are just points along the number line, we can extend our limit from just being over $n\in\mathbb{N}$ to being over real numbers $x$ as $x\to\infty$:

$$\lim_{x\to\infty}\left(1+\frac{r}{x}\right)^x$$

Here's a plot of the continuous extension:

Our original limit is a subsequence of the continuous limit, and so we can choose a different subsequence if we would like and be guaranteed that it will converge to the same limit (since the overall continuous limit exists here). To choose a different discrete subsequence, instead of counting upwards by integers, let's count upwards by multiples of $r$ (taking $r>0$). The new variable in the limit will then be $x=rm$ for $m$ an integer.

Here’s a plot of the continuous curve resampled at these new points

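Now, we just have to compute this limit in terms of things we already know, and our earlier example with $r = \frac{1}{2}$ provides a guide. Indeed, cancelling the $r$s in the inside fraction,

$$\lim_{n\to\infty}\left(1+\frac{r}{rn}\right)^{rn} = \lim_{n\to\infty}\left(1+\frac{1}{n}\right)^{rn} = \left[\lim_{n\to\infty}\left(1+\frac{1}{n}\right)^{n}\right]^{r}$$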

But we know the quantity in the brackets is $e$! So, this limit is precisely $e^r$. Although it was a lot of work to reach this step, it was well worth it. This provides possibly the single most compelling reason that $e$ shows up everywhere: it is not only the base representing 100% continuous growth, but continuous growth at any rate can be represented in terms of it, simply by raising $e$ to the power of that rate! Out of all possible exponential bases, $e$ is the only one with this property. It definitely makes sense to call $e$ the “natural” base.
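The claim that continuous growth at rate $r$ has base $e^r$ can be checked numerically. The sketch below is only an illustration for a few positive rates: the function names `discrete_term` and `resampled_term` are hypothetical, and a large finite `n` stands in for the limit.

```python
import math

def discrete_term(rate, n):
    """(1 + rate/n)**n: the rate split evenly over n steps of the unit interval."""
    return (1.0 + rate / n) ** n

def resampled_term(rate, n):
    """The same curve sampled at x = rate*n, which is ((1 + 1/n)**n)**rate."""
    return ((1.0 + 1.0 / n) ** n) ** rate

n = 1_000_000  # large but finite stand-in for "n goes to infinity"
for rate in (0.5, 1.0, 2.0, 3.0):
    # columns: rate, original sequence, resampled sequence, e**rate
    print(rate, discrete_term(rate, n), resampled_term(rate, n), math.exp(rate))
```

Both columns of approximations settle on the same value as `math.exp(rate)`, which is the point of the subsequence argument: resampling changes how we walk towards the limit, not where we end up.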

Differentiation of Exponentials

It is again due to the properties of $e$ that we have such simple formulas for the derivatives and integrals of an exponential function. The simplest case, of course, is just that of $e^x$, where we have the nice formula $\frac{d}{dx}e^x = e^x$. Because all exponentials can be written simply as stretched copies of this curve, we need not even consider how to differentiate them individually. Instead, we can just focus on the derivative of $ae^{kx}$, knowing that we can determine the appropriate values of the constants $a$ and $k$ using some of our previous arguments. This is of course nothing more than a simple exercise in the chain rule, but we will try to go about it somewhat differently to develop some intuition.

The derivative of a function measures its rate of change in the vertical with respect to the horizontal (thus the notation $\frac{dy}{dx}$), so the effect of a vertical stretch is simple to understand. If we multiply a function by a constant $a$, then we have replaced the y coordinate of each point on the graph with a point $a$ times farther from the x-axis, but we have left the x coordinate unchanged.

If we view the derivative as the change in y relative to the change in x, we note that y changes $a$ times as fast as before, whereas x changes at the same rate. Thus we would expect the rate of change of our new function to be $a$ times greater than before. In symbols, this is one of the properties that makes the derivative a linear operator: $\frac{d}{dx}\left[a f(x)\right] = a\,\frac{d}{dx}f(x)$. In the case of our exponentials, a vertical stretch equates to starting with a different initial amount. The above property can then be interpreted as saying that starting with an amount $a$ causes the rate of change of your function to be $a$ times greater than before. This makes perfect sense in our scheme due to the already-noted fact that exponential growth is characterized by the growth being proportional to the amount available. Since there is $a$ times as much stuff, it grows $a$ times as quickly.

Now what about a horizontal stretch? Going back to thinking of the derivative as a limit of the ratio “y-change to x-change”, we can see that a horizontal stretch leaves the y-coordinate unchanged, but stretches the x-coordinate. If we compress the x-coordinate by a factor of 2, then all of the y-coordinates will be brought twice as close together: that is our x-interval will be half the size that it was before the compression.

Because we are looking at the ratio of y-change to x-change, if we fix our y-change to be the same, then our x-change is half of what it was, and the ratio is doubled (there was a 1/2 in the denominator). In general, if we compress our x axis by a factor of $k$ (that is, replace $x$ with $kx$), then for the same y-change we have an x-interval which is $\frac{1}{k}$ as large, making our new ratio $k$ times the old. In symbols, this is the chain rule for constant coefficients: $\frac{d}{dx}f(kx) = k\,f'(kx)$. Let’s apply this reasoning to exponentials: a horizontal stretch of magnitude $k$ is equivalent to a change of base in general. However, if we choose the base $e$ to begin with, then a horizontal stretch of $r$ can be re-interpreted as the rate of continuous growth. Applying the above rule to the natural exponential gives $\frac{d}{dx}e^{rx} = r\,e^{rx}$. This simply says that if something is continuously growing at rate $r$, then its growth at time $x$ is equal to that rate times the amount currently available.
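Both stretch rules can be checked against a numerical derivative. The following is a rough sketch rather than a proof: `derivative` is a hypothetical helper using a central difference, and the values of `a`, `r` and `x` are arbitrary choices made for illustration.

```python
import math

def derivative(f, x, h=1e-6):
    """Central-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

a, r, x = 3.0, 0.5, 1.2  # arbitrary illustrative values

# Vertical stretch: the slope of a*e^x should be a times the slope of e^x.
vertical = derivative(lambda t: a * math.exp(t), x)

# Horizontal stretch: the slope of e^(r*x) should be r times e^(r*x).
horizontal = derivative(lambda t: math.exp(r * t), x)

print(vertical, a * math.exp(x))
print(horizontal, r * math.exp(r * x))
```

Each printed pair should agree to many decimal places, with the small discrepancy coming from the finite step `h` and floating-point rounding.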

From this we can construct a formula for the derivative of a general exponential $b^x$: a simple horizontal stretch will allow us to write this exponential with base $e$. As was discussed before, the inverse function of $e^x$ will provide us with the correct stretch: $b^x = e^{x\ln b}$, where, to stick with the standard notation in calculus, we write $\log_e$ as $\ln$. This provides us with useful information even beyond just the ability to take the derivative: saying that something grows exponentially with base $b$ is the same as saying it grows continuously at rate $\ln b$. This interpretation of all different exponential bases simply being horizontal stretches of the natural base also gives us the following form of their derivative, $\frac{d}{dx}b^x = \ln(b)\,b^x$, which just says the rate of change is the growth rate times the current amount.

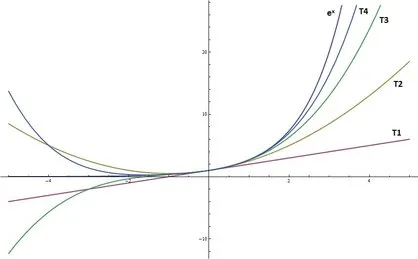
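To see the change of base in action, here is a small Python sketch. It assumes the standard identities $b^x = e^{x\ln b}$ and $\frac{d}{dx}b^x = \ln(b)\,b^x$; the helper names `derivative` and `power` and the sample values are my own and purely illustrative.

```python
import math

def derivative(f, x, h=1e-6):
    """Central-difference estimate of f'(x)."""
    return (f(x + h) - f(x - h)) / (2.0 * h)

def power(base, x):
    """base**x written as a horizontally stretched natural exponential."""
    return math.exp(math.log(base) * x)

base, x = 2.0, 3.0  # arbitrary illustrative values

# The stretched natural exponential reproduces the ordinary power.
print(power(base, x), base ** x)

# The slope of base**x is ln(base) times the current amount.
slope = derivative(lambda t: base ** t, x)
print(slope, math.log(base) * base ** x)
```

Here `math.log(base)` plays the role of the continuous growth rate: doubling per unit time corresponds to growing continuously at rate $\ln 2 \approx 0.693$.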

Computationally, one of the main advantages of $e^x$ lies in the fact that it is its own derivative. From this it's easy to see that the second derivative of $e^x$ is also itself, as is the 3rd or any higher derivative. Most notably, every derivative takes the value 1 at $x=0$. To see why this is useful, consider the Taylor series of a general function $f$ about $x = 0$. If $f$ is analytic, we can express it in the form $f(x) = \sum_{n=0}^{\infty}\frac{f^{(n)}(0)}{n!}x^n$. Using the fact that $f^{(n)}(0) = 1$ for all $n$ when $f(x) = e^x$, we see that $e^x$ is the function with the simplest Taylor series: $e^x = \sum_{n=0}^{\infty}\frac{x^n}{n!} = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots$ The plot below shows the convergence of the first 4 terms in this series towards the exponential:

This provides us with yet another means of calculating the value of $e$, one that turns out to be quite efficient: setting $x$ to 1 in the above formula yields $e = \sum_{n=0}^{\infty}\frac{1}{n!} = 1 + 1 + \frac{1}{2} + \frac{1}{6} + \frac{1}{24} + \cdots$, which is much simpler to compute than the previous limiting definitions.
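To get a feel for how much faster the series is than the limiting definition, here is a short Python comparison. It is a sketch only: `exp_taylor` and `limit_term` are hypothetical names, and the particular term counts are arbitrary.

```python
import math

def exp_taylor(x, terms):
    """Sum of the first `terms` terms of the series sum over n >= 0 of x**n / n!."""
    total, term = 0.0, 1.0  # term starts at x**0 / 0! = 1
    for n in range(terms):
        total += term
        term *= x / (n + 1)  # turn x**n / n! into x**(n+1) / (n+1)!
    return total

def limit_term(n):
    """n-th term of the limiting sequence (1 + 1/n)**n."""
    return (1.0 + 1.0 / n) ** n

for terms in (2, 4, 8, 15):
    approx = exp_taylor(1.0, terms)
    print(terms, approx, abs(approx - math.e))

# For comparison: the 10,000th term of the limiting sequence.
print(limit_term(10_000), abs(limit_term(10_000) - math.e))
```

A handful of series terms already beats the 10,000th term of the limiting sequence, because the factorial in the denominator shrinks each new term far faster than $1/n$ shrinks the error of the limit.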

Although more difficult to think about intuitively, this Taylor series may be taken as the definition of $e^x$ if you like. In fact, it is one of the most useful definitions to keep around, because it makes it easy to extend the meaning of the exponential to other domains (e.g. what does $e$ to the power of a matrix mean? $e$ to a differential operator? $e$ to the imaginary unit?). We won’t deal with it much here, though: extending the domain of the exponential via the approach “plug it into the Taylor series and see what comes out” doesn’t really provide much intuition for why the extension behaves the way it does.

Summary

We’ve covered a ton of information here, so it’s probably a good idea to highlight the important bits (plus, this will show you how far we’ve come!)

- The “laws of exponents” themselves are just a consequence of our notational choice; namely “exponentiation is just a shorthand for repeated multiplication”.

- Negative exponents are a shorthand for repeated division.

- Reciprocal exponents (of the form 1/q) are another notation for the qth root.

- Shifting an exponential horizontally is equivalent to vertically stretching it.

- Changing the base of an exponential is equivalent to horizontally stretching it.

- The unit base for discrete growth is 2.

- The unit base for continuous growth is e.

- Starting with amount A and growing continuously at rate r is modeled by $Ae^{rt}$.

- The exponential with base e is equal to its own derivative precisely because the proportionality constant between current amount and growth rate is 1.