The Fourier Transform

Decomposing functions the same way you decompose vectors — just in infinite dimensions.

Fourier series and the Fourier transform both give us a radically different way of expressing functions: instead of looking at how a function depends on its input, we look at how it decomposes into basic oscillations at different frequencies.

My goal here is not to be rigorous, but to give some explanation and intuition for why the formulas defining the Fourier series coefficients and the Fourier transform have the form they do, as this was somewhat mysterious to me during my first pass at Fourier analysis.

The Goal

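Fourier analysis began with Joseph Fourier’s realization that periodic functions could be written as an infinite sum of sines and cosines. For a $2\pi$-periodic function $f$,

$$f(x) = \frac{a_0}{2} + \sum_{n=1}^{\infty}\big(a_n\cos(nx) + b_n\sin(nx)\big),$$

with the coefficients given by the following formulas:

$$a_n = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\cos(nx)\,dx, \qquad b_n = \frac{1}{\pi}\int_{-\pi}^{\pi} f(x)\sin(nx)\,dx.$$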

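To make the similarity with the Fourier transform clearer, however, we will take a more modern viewpoint and use the identity $e^{ix} = \cos x + i\sin x$ to write things a bit more succinctly:

$$f(x) = \sum_{k\in\mathbb{Z}} c_k\,e^{ikx}, \qquad c_k = \frac{1}{2\pi}\int_{-\pi}^{\pi} f(x)\,e^{-ikx}\,dx.$$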
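As a quick numerical sanity check of how the two forms relate, Euler's identity implies $c_n = (a_n - i\,b_n)/2$ for $n \ge 1$, and $c_0 = a_0/2$. Below is a minimal NumPy sketch; the test function `f` is a hypothetical example, not from the article. The integrals are approximated by Riemann sums on an evenly spaced grid over one period, which is exact up to rounding for trigonometric polynomials like this one.

```python
import numpy as np

# Evenly spaced grid over one period [-pi, pi); for trigonometric polynomials
# this rectangle-rule sum is exact up to floating-point rounding.
N = 1024
x = -np.pi + 2 * np.pi * np.arange(N) / N
dx = 2 * np.pi / N

# Hypothetical test function (not taken from the article)
f = 1.0 + 3.0 * np.cos(2 * x) - 2.0 * np.sin(2 * x)

def a_coef(n):
    # a_n = (1/pi) * integral over [-pi, pi] of f(x) cos(nx) dx
    return np.sum(f * np.cos(n * x)) * dx / np.pi

def b_coef(n):
    # b_n = (1/pi) * integral over [-pi, pi] of f(x) sin(nx) dx
    return np.sum(f * np.sin(n * x)) * dx / np.pi

def c_coef(n):
    # c_n = (1/(2 pi)) * integral over [-pi, pi] of f(x) e^{-inx} dx
    return np.sum(f * np.exp(-1j * n * x)) * dx / (2 * np.pi)

for n in range(4):
    # c_n = (a_n - i b_n) / 2; for n = 0 this reads c_0 = a_0 / 2 since b_0 = 0
    print(n, c_coef(n), (a_coef(n) - 1j * b_coef(n)) / 2)
```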

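The Fourier transform gives a pairing between certain non-periodic functions on the real line: any function whose square is integrable has a Fourier transform, denoted $\hat f$, defined by

$$\hat f(k) = \frac{1}{2\pi}\int_{-\infty}^{\infty} f(x)\,e^{-ikx}\,dx,$$

and $f$ can be recovered from $\hat f$ by the inverse transform

$$f(x) = \int_{-\infty}^{\infty} \hat f(k)\,e^{ikx}\,dk.$$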
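To see that this particular pair of formulas really undo each other, here is a minimal NumPy sketch (not from the article) using a Gaussian $f(x) = e^{-x^2/2}$, whose transform under this convention is known in closed form: $\hat f(k) = e^{-k^2/2}/\sqrt{2\pi}$. Both integrals are approximated by Riemann sums on truncated grids; that shortcut is an assumption that works here only because the Gaussian and its transform decay so quickly.

```python
import numpy as np

# Truncated grids standing in for the real line (the Gaussian is negligible beyond |x| = 20)
dx = 0.01
x = np.arange(-20.0, 20.0, dx)
dk = 0.01
ks = np.arange(-10.0, 10.0, dk)

f = np.exp(-x**2 / 2)

def fourier_transform(k):
    # \hat f(k) = (1 / (2 pi)) * integral of f(x) e^{-ikx} dx, as a Riemann sum
    return np.sum(f * np.exp(-1j * k * x)) * dx / (2 * np.pi)

def inverse_transform(f_hat, x0):
    # f(x0) = integral of \hat f(k) e^{ikx0} dk, as a Riemann sum over ks
    return np.sum(f_hat * np.exp(1j * ks * x0)) * dk

f_hat = np.array([fourier_transform(k) for k in ks])
exact = np.exp(-ks**2 / 2) / np.sqrt(2 * np.pi)

print(np.max(np.abs(f_hat - exact)))                      # agreement with the closed form
print(inverse_transform(f_hat, 1.0).real, np.exp(-0.5))   # recovering f(1)
```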

Our goal is to figure out what these formulas mean, and where they came from.

In Words

Let’s begin by trying to read these formulas in words. One formula from each pair isn’t that bad. Looking at Fourier series first, $f(x) = \sum_{k\in\mathbb{Z}} c_k e^{ikx}$ says that any periodic function can be written as an infinite sum of complex exponentials (sines and cosines) with different coefficients (think of these as “weights”) on each. While perhaps unbelievable (what?! you can write every periodic function as a sum of nice smooth oscillations?!), it’s at least an understandable claim.

The same holds for one of the formulas from the Fourier transform pair:

$$f(x) = \int_{-\infty}^{\infty} \hat f(k)\,e^{ikx}\,dk.$$

This says that every square-integrable function on $\mathbb{R}$ is actually the integral of some other function, $\hat f$, against a complex exponential. But interpreting the integral as a continuous sum, this has the same content as the formula above: it says every function may be written as a sum of weighted complex exponentials.

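Ok, so what about the weights? According to our interpretation above, the numbers $c_k$ and $\hat f(k)$ are supposed to tell us what weight we should assign to $e^{ikx}$ as a component of $f$:

$$c_k = \frac{1}{2\pi}\int_{-\pi}^{\pi} f(x)\,e^{-ikx}\,dx, \qquad \hat f(k) = \frac{1}{2\pi}\int_{-\infty}^{\infty} f(x)\,e^{-ikx}\,dx.$$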

These also appear to say the same thing in spirit: the amount of the frequency $k$ (that is, of $e^{ikx}$) in the function $f$ is given by some multiple of an integral of $f$ against $e^{-ikx}$. But why should this measure the amount? It turns out there’s a good reason, and to see it, let’s take a detour into linear algebra!

Vectors and Bases

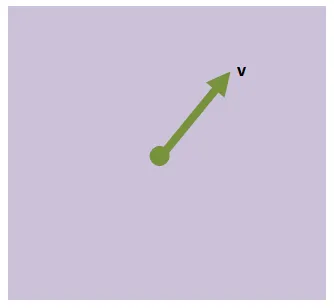

To keep things simple, let’s start out with a vector in $\mathbb{R}^2$. Call this guy $v$. Without choosing a basis, we don’t have any convenient way to express $v$ other than by its name.

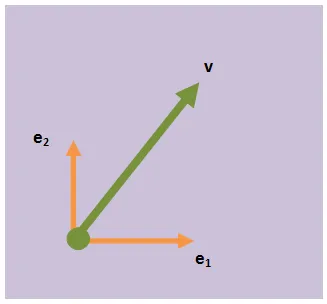

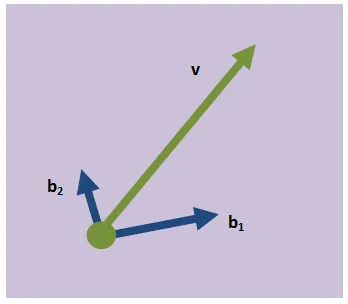

To make it easier to express $v$ and other vectors we might care about, let’s bring a set of basis vectors into our picture. For now, let’s stick to the standard basis $e_1 = (1, 0)$ and $e_2 = (0, 1)$.

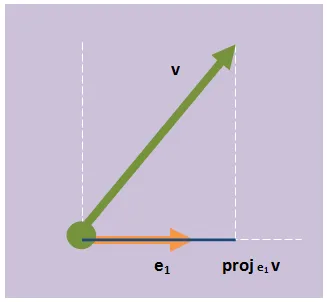

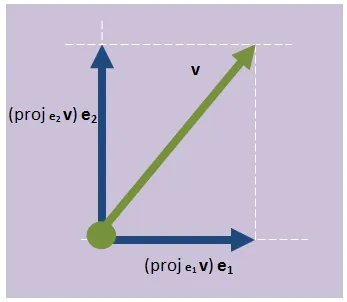

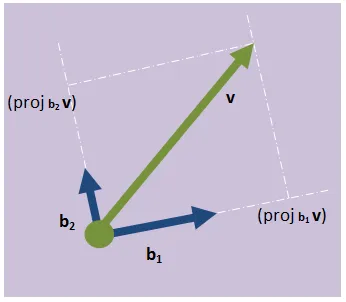

To write $v$ in terms of them, we need a way to say “how much” of $v$ is in the direction of each. Let’s look at how to do this for $e_1$. Suppose for a second we have a “projection” operation, which takes $v$ and “squishes” it down onto $e_1$. We will call this $\mathrm{proj}_{e_1}(v)$. Now let’s do the same for $e_2$. We now have the “amount” of $v$ in the direction of each of our basis vectors, so how do we go about using this information to describe the vector? Well, we used the projection operation and the basis to “take our vector apart”, so let’s put it back together! Multiplying each basis vector by its projection amount gives us two vectors, and adding these together gives us $v$ back.

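In symbols, $v = \mathrm{proj}_{e_1}(v)\,e_1 + \mathrm{proj}_{e_2}(v)\,e_2$. For now, we will do away with the projection notation and simply refer to these amounts as the first and second components of $v$, written $v_1$ and $v_2$. That is, we will say that $v = v_1 e_1 + v_2 e_2$, which is often abbreviated $v = (v_1, v_2)$.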

So, we have a nice coordinate representation of our vector, which we were able to construct by projecting our vector onto each basis vector individually. Now, what happens if we don’t find the “standard” basis particularly convenient for our problem, and we would like to look at $v$ from a different perspective? Well, we can always just choose a new basis, say $b_1, b_2$.

(Throughout this, we are only going to consider orthogonal bases, because that is all we will need to make the analogy with the Fourier transform.) Want a representation of our vector in terms of this basis? No problem: we can just project $v$ onto each basis vector like before.

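This lets us express $v$ in a new way: $v = v'_1 b_1 + v'_2 b_2$, where $v'_1 = \mathrm{proj}_{b_1}(v)$ and $v'_2 = \mathrm{proj}_{b_2}(v)$, which we could write as $(v'_1, v'_2)_b$, or just $(v'_1, v'_2)$ if the basis was understood.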

These two descriptions of our vector have different numerical values (as they should, since each describes the vector in terms of the “amount” of it in different directions), but there is no question they represent the same vector. From both representations it is simple to recover $v$, and in fact you can convert between the two representations with ease (think change-of-basis matrix).
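Here is the take-apart-and-reassemble process as a minimal NumPy sketch. The vector `v` and the second basis `b1`, `b2` are hypothetical choices. The second basis is given unit length, which is what lets each projection “amount” be computed as a plain dot product (the next section explains why the dot product computes the projection).

```python
import numpy as np

v = np.array([3.0, 1.0])   # hypothetical example vector

# Standard basis: the projection amounts are just the usual coordinates
e1 = np.array([1.0, 0.0])
e2 = np.array([0.0, 1.0])
standard = np.array([v @ e1, v @ e2])

# Hypothetical second basis: orthogonal and of unit length
b1 = np.array([1.0, 1.0]) / np.sqrt(2)
b2 = np.array([-1.0, 1.0]) / np.sqrt(2)
rotated = np.array([v @ b1, v @ b2])

# Put v back together from each set of amounts
v_from_standard = standard[0] * e1 + standard[1] * e2
v_from_rotated = rotated[0] * b1 + rotated[1] * b2

print(standard, rotated)
```

Stacking `b1` and `b2` as the rows of a matrix `B`, the second set of amounts is simply `B @ v`: that matrix is the change-of-basis matrix for this orthonormal basis.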

Projections

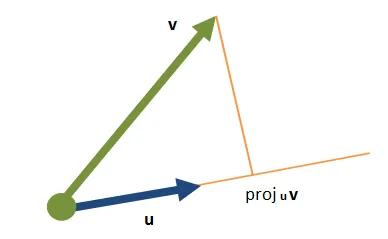

Let’s take another quick detour and look at how to define the projection operator. We’ll look at two vectors $v, w$ in $\mathbb{R}^2$ and determine how to project $v$ onto $w$.

If we look at this as a right triangle, we know the length of the hypotenuse (it’s just $|v|$), and the projection we want is the length of the bottom side. If we know the angle $\theta$ between $v$ and $w$, we can express this length as

$$\mathrm{proj}_w(v) = |v|\cos\theta.$$

Conveniently, this looks quite a bit like the geometric formulation of the dot product between two vectors. Recall that the dot product of two vectors satisfies $v\cdot w = |v|\,|w|\cos\theta$ for $\theta$ the angle between them. Thus

$$\mathrm{proj}_w(v) = \frac{v\cdot w}{|w|},$$

so our sought-after projection operator is none other than a rescaling of the dot product! (To get the multiple of $w$ itself that has this length, we divide by $|w|$ once more: the component of $v$ along $w$ is $\frac{v\cdot w}{|w|^2}\,w$.) And so we’ve managed to kick the can down the road a little farther: we wanted to understand vectors so we wrote them as projections onto an orthogonal basis, then we wanted to understand projections so we wrote them in terms of the dot product. I bet you can guess what we have to take a look at now…
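A quick numerical check of the projection formula, using hypothetical vectors `v` and `w`: the geometric length $|v|\cos\theta$ and the rescaled dot product $v\cdot w/|w|$ agree, and subtracting the component $\frac{v\cdot w}{|w|^2}w$ from $v$ leaves something perpendicular to $w$. This is a minimal NumPy sketch, not the article's own code.

```python
import numpy as np

v = np.array([2.0, 3.0])   # hypothetical vectors
w = np.array([4.0, 1.0])

# Geometric version: |v| cos(theta), theta = angle between v and w
theta = np.arctan2(v[1], v[0]) - np.arctan2(w[1], w[0])
geometric = np.linalg.norm(v) * np.cos(theta)

# Dot-product version: (v . w) / |w|
algebraic = (v @ w) / np.linalg.norm(w)

# The component of v along w, as a multiple of w: (v . w / |w|^2) w
component = (v @ w) / (w @ w) * w

print(geometric, algebraic)
```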

The Dot Product

As our goal is eventually to understand the formulas of Fourier, it will be helpful here if we don’t continue to restrict ourselves to $\mathbb{R}^2$. Let’s say we have two vectors $v, w$ in $\mathbb{R}^n$, which we can express in the standard basis as $v = (v_1, \dots, v_n)$ and $w = (w_1, \dots, w_n)$. Their dot product is then given by the formula

$$v\cdot w = \sum_{i=1}^{n} v_i w_i.$$

So, in a coordinate representation, we can say that the dot product is the sum of the products of each coordinate. There is one more important point here: we want this definition of the dot product to agree with the geometric one, and if we allow our vectors to have complex entries, we need to take the complex conjugate of one of the vectors to accomplish this, replacing the above with this new definition:

$$v\cdot w = \sum_{i=1}^{n} v_i\,\overline{w_i},$$

where the overbar represents the complex conjugate. (For example, this guarantees that $v\cdot v = \sum_i |v_i|^2 = |v|^2$ is a non-negative real number, as a squared length should be.) This definition reduces to the previous one if $w$ has all real components; the important part is that this is the “good” definition, in that it agrees with our geometric definition and allows us to use the dot product to express projections. Now that we have a formula for the projection, let’s write out in full detail our decomposition of a vector into a sum of weighted orthogonal basis vectors $b_1, \dots, b_n$:

$$v = \sum_{j=1}^{n} \frac{v\cdot b_j}{b_j\cdot b_j}\,b_j.$$
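A minimal NumPy sketch of why the conjugate matters, with a hypothetical complex vector `v`: without the conjugate, “$v\cdot v$” comes out as the complex number $3 - 2i$ and cannot be a squared length; with it, we get $|1+i|^2 + |2-i|^2 = 7$. Note that NumPy's `np.vdot(a, b)` conjugates its *first* argument, so our `dot(v, w)` equals `np.vdot(w, v)`.

```python
import numpy as np

def dot(v, w):
    # Complex dot product: sum over i of v_i * conj(w_i)
    return np.sum(v * np.conj(w))

v = np.array([1 + 1j, 2 - 1j])   # hypothetical complex vector
w = np.array([3 - 2j, 1j])       # another hypothetical vector

naive = np.sum(v * v)   # without the conjugate: not a real length
good = dot(v, v)        # with the conjugate: the squared length of v
print(naive, good)
```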

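Just for the sake of being able to write things out explicitly, let’s say that we know how to write $v$ in the standard basis $e_1, \dots, e_n$, but we would like to express it in another orthogonal basis $b_1, \dots, b_n$. Writing $b_{j,i}$ for the $i$th standard component of $b_j$, we get

$$v = \sum_{j=1}^{n} \left( \frac{1}{b_j\cdot b_j}\sum_{i=1}^{n} v_i\,\overline{b_{j,i}} \right) b_j,$$

where we computed each dot product from the components in the standard basis, so that $\frac{1}{b_j\cdot b_j}\sum_{i} v_i\,\overline{b_{j,i}}$ is the amount of $v$ in the direction $b_j$.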
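The double sum above can be checked numerically. In this minimal NumPy sketch the orthogonal basis of $\mathbb{C}^n$ is our own (hypothetical) choice: $b_j$ has components $e^{2\pi i jm/n}$ for $m = 0, \dots, n-1$. These vectors are orthogonal with $b_j\cdot b_j = n$, and they happen to be the discrete Fourier basis, so NumPy's `np.fft.fft`, which computes $\sum_m v_m e^{-2\pi i jm/n}$, gives the same amounts up to the factor $1/n$.

```python
import numpy as np

n = 8
m = np.arange(n)
# Hypothetical orthogonal basis of C^n: b_j has components exp(2 pi i j m / n)
basis = [np.exp(2j * np.pi * j * m / n) for j in range(n)]

rng = np.random.default_rng(0)
v = rng.standard_normal(n)   # coordinates of v in the standard basis

def amount(v, b):
    # (1 / (b . b)) * sum_i v_i * conj(b_i): the amount of v in the direction b
    return np.sum(v * np.conj(b)) / np.sum(b * np.conj(b))

coeffs = np.array([amount(v, b) for b in basis])

# Reassemble v as the weighted sum of basis vectors
v_rebuilt = sum(c * b for c, b in zip(coeffs, basis))
```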

The Vector Space of Functions

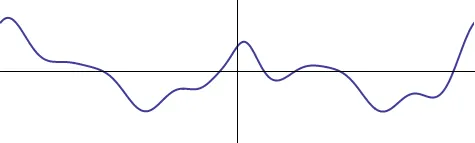

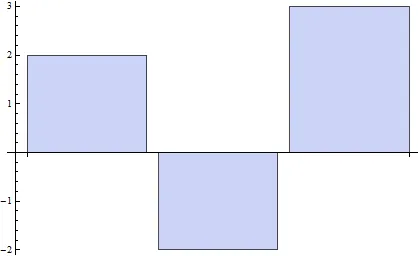

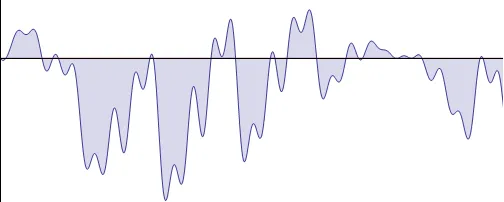

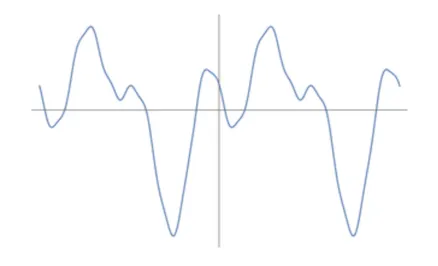

This detour into linear algebra is more applicable than it may have seemed at first, for our actual problem is taking place in a vector space! The space of functions from $\mathbb{R}$ to $\mathbb{R}$ (or to $\mathbb{C}$) is a vector space, as we can add functions and multiply them by scalars; the only thing that differs from our usual experience is that it’s infinite-dimensional! Here’s a vector in that space.

You can think of this graph as a “coordinate representation” of our function $f$: for each point $x$ on the horizontal axis, we can say the $x$th coordinate of $f$ is $f(x)$. It’s going to turn out that to make sense of Fourier analysis we are going to need to project a function like this onto a basis of sinusoids, so we should first talk about the dot product for functions. To figure out the right generalization, let’s return to familiar linear algebra in finite dimensions. We defined the dot product of two vectors in $\mathbb{R}^n$ to be

$$v\cdot w = \sum_{i=1}^{n} v_i w_i.$$

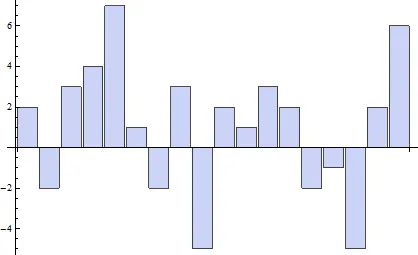

Take for example two vectors $v$ and $w$ in $\mathbb{R}^3$. We can visualize this sum geometrically as an area: we make a bar graph with three entries, one bar for each component, and the height of the $i$th bar is $v_i w_i$.

If we now go from 3D vectors to vectors with more and more components, the sum representing the dot product is made of more and more rectangles. Below we show three cases: 18-dimensional vectors, 100-dimensional vectors, and 1,000-dimensional vectors.

In each case the dot product’s value is the net area under this bar graph. So, what happens in infinite dimensions? Here, every single point on the horizontal axis represents a coordinate (the “$x$th coordinate of $f$”), and so we must sum over every coordinate, over a continuum. Just like before, this continuous sum becomes an integral (strictly speaking, each bar needs width $\Delta x$ so that the total area $\sum_i f(x_i)\,g(x_i)\,\Delta x$ stays finite, and this Riemann sum tends to the integral). Thus, just as the dot product of vectors is the sum of the products of the coordinates, the dot product of functions (usually called the $L^2$ inner product) is the integral of the products of the values:

$$\langle f, g\rangle = \int_{-\infty}^{\infty} f(x)\,g(x)\,dx.$$

However, we do have one more thing to clean up. This definition works fine in the real case, but for complex functions we need to take the complex conjugate (again, this makes sure that the dot product still works how we want it to, so that it lets us define a projection operator):

$$\langle f, g\rangle = \int_{-\infty}^{\infty} f(x)\,\overline{g(x)}\,dx.$$

And finally, if we are working with functions on a bounded domain like $[a, b]$ instead of the real line, the integral only goes over these bounds (as this is the only place where our function’s “coordinates” are defined). For us, the important case is periodic functions on $[-\pi, \pi]$, which have the dot product

$$\langle f, g\rangle = \int_{-\pi}^{\pi} f(x)\,\overline{g(x)}\,dx.$$
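A minimal NumPy sketch of the bar-graph picture on a bounded domain, using hypothetical functions $f(x) = x$ and $g(x) = x^2$ on $[0, 1]$, chosen so the exact inner product is $\int_0^1 x^3\,dx = 1/4$. Sampling each function at the midpoints of $n$ bars of width $\Delta x = 1/n$ gives a dot product of $n$-dimensional vectors scaled by $\Delta x$, and the total area approaches the integral as $n$ grows through the same sizes as the three cases shown earlier.

```python
import numpy as np

def f(x):
    return x

def g(x):
    return x ** 2

def bar_graph_area(n):
    # n bars of width dx; bar i has height f(x_i) * g(x_i) at the midpoint x_i
    dx = 1.0 / n
    x = (np.arange(n) + 0.5) * dx
    return np.sum(f(x) * g(x) * dx)

for n in (18, 100, 1000):
    print(n, bar_graph_area(n))   # approaches the integral 1/4
```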

Projecting a Function onto a Basis

Now we are ready to really build the idea of Fourier analysis. We start with a function $f$, thought of as an infinite-dimensional vector:

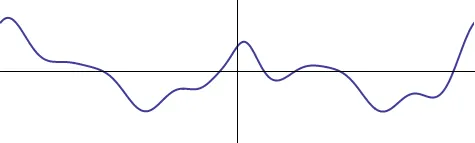

We want to write it in a basis: as a nice weighted sum of simple functions. Here, the simple functions are going to be oscillations. One could choose a basis of sines and cosines for the oscillations, $\cos(kx)$ and $\sin(kx)$.

But we know the complex exponential is a better choice: it combines both into one, making our formulas shorter:

$$e^{ikx} = \cos(kx) + i\sin(kx).$$

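Different frequencies of oscillation are modeled by changing the angular frequency of the sinusoids, so we consider $e^{ikx}$ for various values of $k$. Following what we did above, if we were to try to break a function up into its component frequencies (modeled by the functions $e^{ikx}$), we would write something like this:

$$f(x) = \sum_{k} \frac{\langle f, e^{ikx}\rangle}{\|e^{ikx}\|^2}\,e^{ikx},$$

where $\|e^{ikx}\|^2 = \langle e^{ikx}, e^{ikx}\rangle$ plays the role that $b_j\cdot b_j$ played for vectors.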

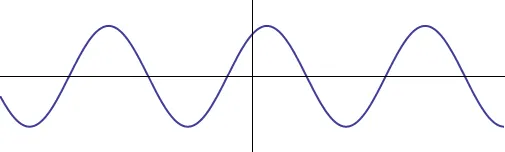

Of course, there are many things in this formula we still need to make sense of, but bear with me. First off, this sum is infinite, as we said above, but is it discrete or continuous (is it an honest sum, or an integral)? Well, that depends on how many different frequencies we need to describe our function. If we are talking about a periodic function, then much like a guitar string can only play discretely many sounds (it can either vibrate with zero, one, two, … nodes), there are only discretely many oscillations with that given period: for a $2\pi$-periodic function, $e^{ikx}$ repeats every $2\pi$ exactly when $k$ is an integer.

Thus, in that case our sum will just be over the integers (representing how many times the function vibrates within the interval), and we have

$$f(x) = \sum_{k\in\mathbb{Z}} \frac{\langle f, e^{ikx}\rangle}{\|e^{ikx}\|^2}\,e^{ikx}$$

to make sense of. However, if we are not looking at periodic functions but instead at all functions on the real line, there is no restriction on the period! All functions of the form $e^{ikx}$ with $k \in \mathbb{R}$ could contribute, and so we expect to have to sum up over a continuum of contributions, making it into an integral:

$$f(x) = \int_{-\infty}^{\infty} \frac{\langle f, e^{ikx}\rangle}{\|e^{ikx}\|^2}\,e^{ikx}\,dk$$

is what we should try to interpret.

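In each case, this leaves us with two things to fill in: a value for $\|e^{ikx}\|^2$ and an expression for $\langle f, e^{ikx}\rangle$. Luckily we already figured out how dot products work in infinite dimensions: they involve integration and complex conjugation. And as the complex conjugate of $e^{ikx}$ is none other than $e^{-ikx}$, we can see that, depending on which case we are considering, the dot product is one of the below:

$$\langle f, e^{ikx}\rangle = \int_{-\pi}^{\pi} f(x)\,e^{-ikx}\,dx \quad \text{(periodic)}, \qquad \langle f, e^{ikx}\rangle = \int_{-\infty}^{\infty} f(x)\,e^{-ikx}\,dx \quad \text{(on } \mathbb{R}\text{)}.$$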

These are almost identical to the formulas for the coefficients of the Fourier series and for the Fourier transform! All we are missing is a normalization constant, which reminds us that we have still forgotten one part of the definition of the projection: dividing by $\|e^{ikx}\|^2$, the size of the vector we are projecting onto. In the periodic case this size is exactly $2\pi$, since $\|e^{ikx}\|^2 = \int_{-\pi}^{\pi} e^{ikx}\,\overline{e^{ikx}}\,dx = \int_{-\pi}^{\pi} 1\,dx = 2\pi$. In the non-periodic case, where the domain is all of $\mathbb{R}$, the same integral diverges, but it works out best to use the same constant $2\pi$: that is the choice that pairs with the inverse transform in the form $f(x) = \int \hat f(k)\,e^{ikx}\,dk$. (There are better explanations for why these values are what they are, but I’m just trying to tell a memorable story here.)
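A minimal NumPy sketch checking the two facts the periodic case relies on, using a Riemann sum on an evenly spaced grid over $[-\pi, \pi)$ (exact up to rounding for these exponentials): different frequencies are orthogonal, and $\|e^{ikx}\|^2 = 2\pi$. The frequencies 3 and 5 are arbitrary choices for illustration.

```python
import numpy as np

N = 256
x = -np.pi + 2 * np.pi * np.arange(N) / N   # evenly spaced grid over [-pi, pi)
dx = 2 * np.pi / N

def inner(f_vals, g_vals):
    # <f, g> = integral over [-pi, pi] of f(x) * conj(g(x)) dx, as a Riemann sum
    return np.sum(f_vals * np.conj(g_vals)) * dx

def e(k):
    # Samples of the basis function e^{ikx}
    return np.exp(1j * k * x)

size = inner(e(3), e(3))    # the "size" ||e^{3ix}||^2, which should be 2*pi
cross = inner(e(3), e(5))   # different frequencies: should vanish
print(size, cross)
```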

Thus, we have recovered the formulas for the Fourier coefficients and the Fourier transform: they are just the projections of our function onto functions of the form $e^{ikx}$. It really is just linear algebra (albeit of the infinite-dimensional variety).
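Putting the pieces together, here is a minimal NumPy sketch that computes $c_k$ as a projection and then reassembles $f$ from the weighted basis functions. The test function is a hypothetical stand-in (not the article's own example), with a sine at frequency 1, both a sine and a cosine at frequency 2, and a cosine at frequency 10; summing over $|k| \le 12$ is enough for this particular $f$.

```python
import numpy as np

N = 256
x = -np.pi + 2 * np.pi * np.arange(N) / N   # evenly spaced grid over one period
dx = 2 * np.pi / N

# Hypothetical stand-in function, not the article's exact example
f = 2 * np.sin(x) + np.cos(2 * x) + np.sin(2 * x) + 0.5 * np.cos(10 * x)

def coefficient(k):
    # c_k = <f, e^{ikx}> / ||e^{ikx}||^2 = (1 / (2 pi)) * integral of f(x) e^{-ikx} dx
    return np.sum(f * np.exp(-1j * k * x)) * dx / (2 * np.pi)

K = 12   # enough frequencies for this particular f (its highest is 10)
c = {k: coefficient(k) for k in range(-K, K + 1)}

# Reassemble f as the weighted sum of basis functions e^{ikx}
f_rebuilt = sum(c[k] * np.exp(1j * k * x) for k in c)

for k in (1, 2, 4, 10):
    print(k, np.round(c[k], 6))
```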

Visualizing the Projection

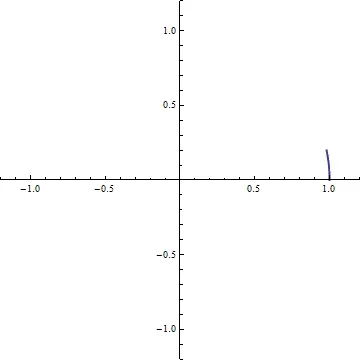

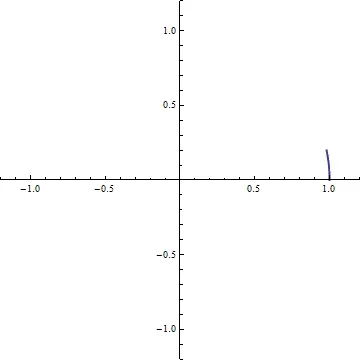

It turns out that the integral defining the projection here has a rather pretty interpretation I’d like to share quickly before ending. Remember that we can think of $e^{ikx}$ as a point spinning counterclockwise around the unit circle at constant angular speed $k$ as $x$ increases:

The complex conjugate, $e^{-ikx}$, is just the clockwise version of this.

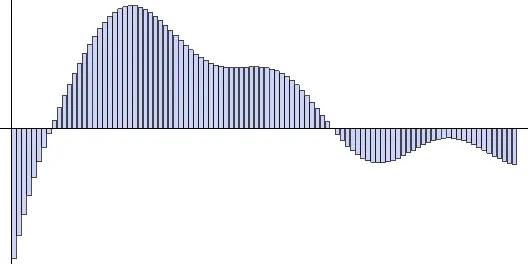

Remember that our projection integral is of the form $\int f(x)\,e^{-ikx}\,dx$, where $f$ is a real-valued function, so at each $x$ the integrand is just a complex number in polar form: its distance from the origin is $|f(x)|$ and its angle with the real axis is $-kx$ (flipped by $180^\circ$ wherever $f(x)$ is negative)! It’ll be easiest if we do an example. Consider the periodic function

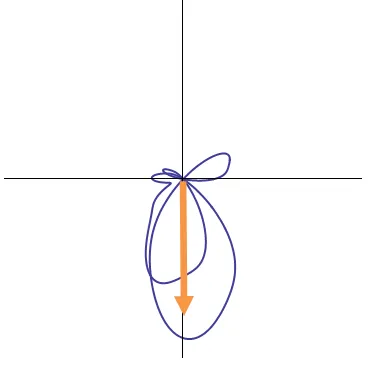

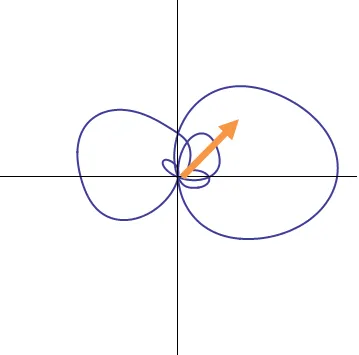

Multiplying this by $e^{-ix}$ corresponds to spinning it clockwise about the origin in $\mathbb{C}$ at unit speed:

Integrating this over one period adds up all of the directions, weighted by the magnitude in each direction: the final answer is a vector in the plane (a complex number) which, after dividing by the period $2\pi$, is the average of the spun-out curve and so represents the average direction it points in.

The net contribution of angular frequency 1 can then be seen to be represented by an arrow along the negative imaginary axis. The length of this arrow is proportional to the amplitude of the contribution (for a real function it is exactly half the amplitude, with the other half sitting at frequency $-1$), and its phase ($-90$ degrees from the real axis) tells us that the contribution is sinusoidal.
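The spinning picture translates almost directly into code. In this minimal NumPy sketch the function `f` is a hypothetical stand-in with a sine of amplitude 2 at frequency 1 (not the article's exact example); we multiply its samples by $e^{-ix}$ and average over one period. The arrow comes out as $-i$: it points along the negative imaginary axis, and its length 1 is half the amplitude 2.

```python
import numpy as np

N = 256
x = -np.pi + 2 * np.pi * np.arange(N) / N   # one period, evenly spaced
# Hypothetical stand-in function, not the article's exact example
f = 2 * np.sin(x) + np.cos(2 * x) + np.sin(2 * x) + 0.5 * np.cos(10 * x)

# Spin each point of the graph clockwise at unit speed: f(x) -> f(x) e^{-ix}
spun = f * np.exp(-1j * x)

# Averaging over the period (integral / 2 pi) gives the projection onto e^{ix}
arrow = spun.mean()
print(arrow, np.degrees(np.angle(arrow)))
```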

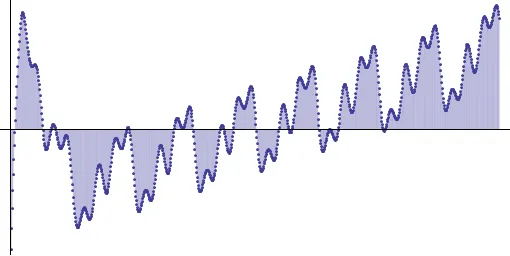

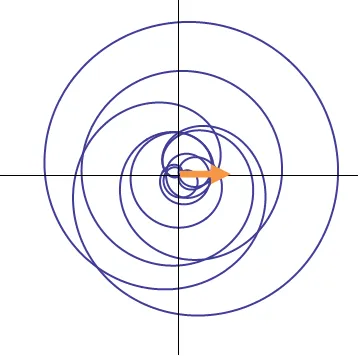

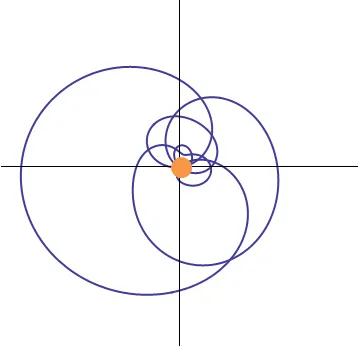

If sinusoidal contributions are represented by vectors along the imaginary axis, let’s look at a cosinusoidal contribution: we will spin the function at an angular speed of 10 to single out the cosine at that frequency:

Adding up all the vectors along this spinning process yields the average, which is the projection:

Thus, as we should have expected, the net effect is a smaller arrow along the real axis, representing zero initial phase.

Yet another case we can investigate is that of angular speed 2. In our formula, we have both sinusoidal and cosinusoidal oscillations at that frequency. From elementary trigonometry we know that the sum of these two is a single oscillation with an initial phase shift: $a\cos(2x) + b\sin(2x) = R\cos(2x - \varphi)$, with $R = \sqrt{a^2 + b^2}$ and $\tan\varphi = b/a$. For illustrative purposes, let’s perform our projection onto this frequency and see.

The net contribution is found to be phase-shifted by 45°, representing a combination of both real (cosine) and imaginary (sine) components.
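Continuing with the same hypothetical stand-in function, whose frequency-2 part is $\cos(2x) + \sin(2x) = \sqrt{2}\cos(2x - \pi/4)$, spinning at speed 2 and averaging gives the arrow $(1 - i)/2$. That is a length of $\sqrt{2}/2$ (half the combined amplitude $\sqrt{2}$) at $-45^\circ$, i.e. $45^\circ$ below the real axis with the $e^{-ikx}$ convention used here. A minimal NumPy sketch:

```python
import numpy as np

N = 256
x = -np.pi + 2 * np.pi * np.arange(N) / N   # one period, evenly spaced
# Hypothetical stand-in function, not the article's exact example
f = 2 * np.sin(x) + np.cos(2 * x) + np.sin(2 * x) + 0.5 * np.cos(10 * x)

# Spin at angular speed 2 and average over the period
arrow2 = (f * np.exp(-2j * x)).mean()
length = abs(arrow2)
phase_degrees = np.degrees(np.angle(arrow2))
print(arrow2, length, phase_degrees)
```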

There’s still one more thing we can check out with this example: what if we spin our function at angular frequency 4? Looking back at the original function, there are no oscillations at that frequency present, so we expect the Fourier component at that frequency to be 0.

While the graph certainly moves farther out to the left, it spends more time on the right. And when we carefully add it all up to get the projection, we find precisely zero:
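The same check in code, again with the hypothetical stand-in function rather than the article's exact example: spun at speed 4, where it has no oscillation, the average vanishes up to rounding error. For comparison, speed 10, where the stand-in has a cosine of amplitude 0.5, gives a purely real arrow of length 0.25. A minimal NumPy sketch:

```python
import numpy as np

N = 256
x = -np.pi + 2 * np.pi * np.arange(N) / N   # one period, evenly spaced
# Hypothetical stand-in function, not the article's exact example
f = 2 * np.sin(x) + np.cos(2 * x) + np.sin(2 * x) + 0.5 * np.cos(10 * x)

# Spin at speeds 4 and 10 and average over the period
arrows = {k: (f * np.exp(-1j * k * x)).mean() for k in (4, 10)}
print(arrows)
```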

Summary

Here’s what I hope you take away from this article:

- Functions can be decomposed into frequencies, much like vectors can along bases

- The contribution of each frequency to a function is the projection of that function onto the frequency

- Projecting onto a frequency is accomplished by spinning the function at that frequency and looking at the net effect

- The set of contribution amounts for each frequency in a function is called its Fourier transform (for a periodic function, its Fourier coefficients)

- Reconstituting a function from its frequency components is called the inverse Fourier transform

- We can look at the coordinate representation and the frequency representation as two ways to get information about the same function; and we can look at their values as being the components in two different “bases”